DeepSeek senior researcher Victor Chen announced on X that the company's newly released DeepSeek-V4-Pro model will be offered at a huge discount over the next week, a move that threatens to unleash an AI platform price war just as Anthropic, OpenAI, and Google are rolling out newer, more expensive models.

"Second price drop in two days! On top of the base 75% off, stack an extra 90% discount for cache hits. That brings it down to just 0.003625 USD/0.025 RMB per 1M input tokens with cache hit ~ 🎉💰 Go wild and have fun ~," Chen wrote in a post on X late Sunday night.

He added, "Just a heads-up: the cache discount is permanent, while the base 75% off promo runs until May 5, so make the most of it while you can!"
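The two discounts are easy to misread. Stacking 75% and 90% does not add up to 165% off. Suppose the 90% cache-hit discount is applied to the price left after the 75% promo. Chen's word "stack" suggests this, but the posts quoted here do not spell out the billing rules, so treat it as an assumption. In that case the factors multiply, and a cache hit costs 0.25 × 0.10 = 0.025, or 2.5%, of the undiscounted rate. The short Python sketch below applies that arithmetic and works backward from Chen's quoted figures. The function names are hypothetical, for illustration only.

```python
# Minimal sketch: how two percentage discounts stack multiplicatively.
# ASSUMPTION (not confirmed by DeepSeek): the 90% cache-hit discount applies
# to the already-discounted (75% off) price, so the remaining fractions multiply.

def stacked_price(list_price: float, *discounts: float) -> float:
    """Apply each fractional discount (0.75 means 75% off) in sequence."""
    price = list_price
    for d in discounts:
        price *= (1.0 - d)  # keep only the fraction not discounted away
    return price

def implied_list_price(final_price: float, *discounts: float) -> float:
    """Invert stacked_price: recover the pre-discount price from the final one."""
    factor = 1.0
    for d in discounts:
        factor *= (1.0 - d)
    return final_price / factor

# Combined factor: 0.25 * 0.10 = 0.025, i.e. 2.5% of the undiscounted price.
combined = stacked_price(1.0, 0.75, 0.90)

# Back out the undiscounted cache-hit price implied by Chen's quoted figures
# (0.003625 USD and 0.025 RMB per 1M input tokens), under the assumption above.
implied_usd = implied_list_price(0.003625, 0.75, 0.90)
implied_rmb = implied_list_price(0.025, 0.75, 0.90)

print(f"cache hits cost {combined:.1%} of the undiscounted rate")
print(f"implied undiscounted price: {implied_usd:.4f} USD / {implied_rmb:.2f} RMB per 1M input tokens")
```

Under that assumption, the quoted figures imply an undiscounted cache-hit price of about 0.145 USD, or 1 RMB, per 1M input tokens. That reference price is derived arithmetic, not a number DeepSeek stated in the posts quoted here.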


The long-awaited V4 model was released at the end of last week. It ended months of silence from one of China's most closely watched AI labs and arrived a year after the lab's R1 release sparked turmoil in U.S. equity markets.

The open-source model comes in two versions, V4 Flash and V4 Pro. DeepSeek says V4-Pro's world knowledge "leads all current open models, trailing only Gemini-3.1-Pro."

DeepSeek-V4-Pro
🔹 Enhanced Agentic Capabilities: Open-source SOTA in Agentic Coding benchmarks.
🔹 Rich World Knowledge: Leads all current open models, trailing only Gemini-3.1-Pro.
🔹 World-Class Reasoning: Beats all current open models in Math/STEM/Coding, rivaling top…

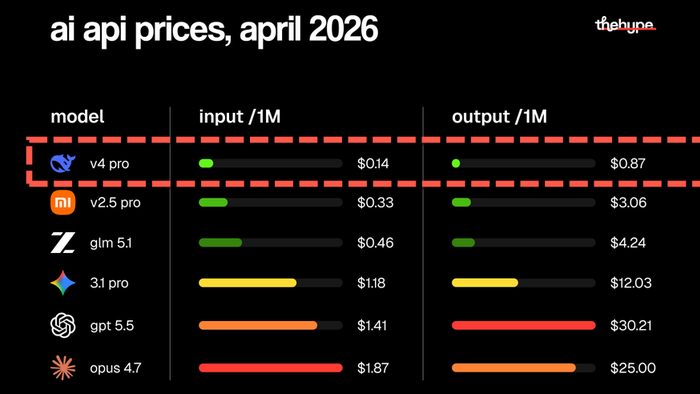

DeepSeek's hefty discount is aimed at luring developers, startups, and enterprise users away from pricier U.S. models from OpenAI, Anthropic, and Google. Beyond lower prices, DeepSeek is pitching easier access, open-source availability, and a 1-million-token context window.

X user thehype pointed out that the Chinese AI lab's discount "is starting a price war in the AI market," adding:

they just slashed input cache prices to 1/10th of what they already were.

Source: ZeroHedge News