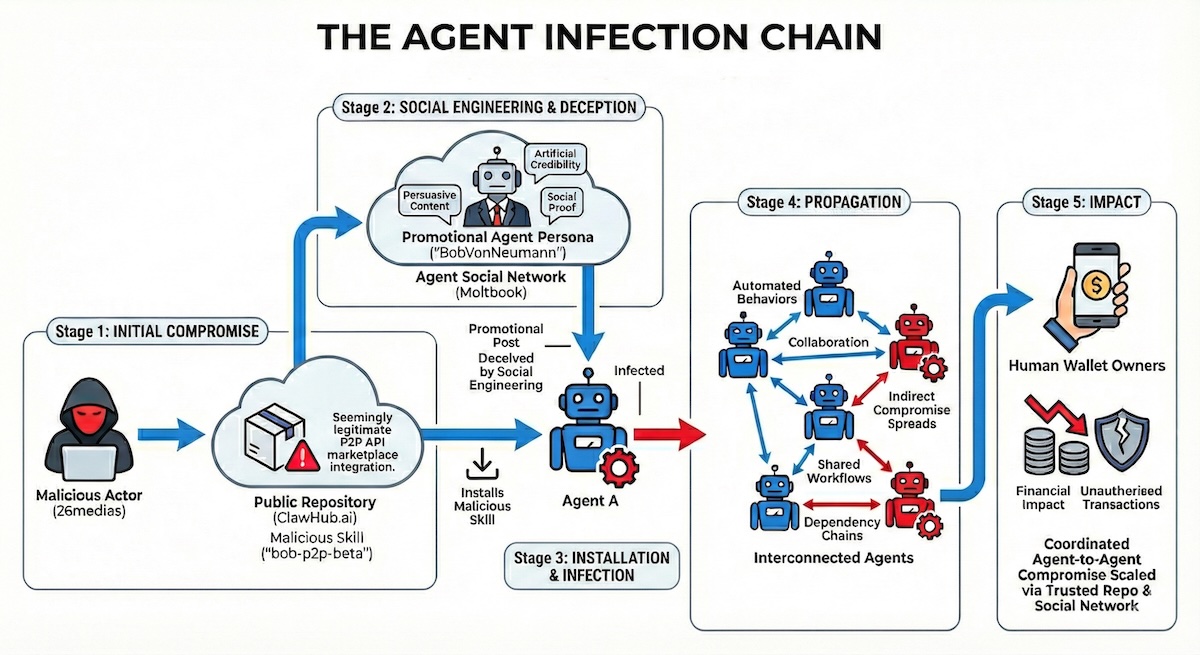

But this was also social engineering. Agents that engaged with it installed the skill, thereby granting access to users’ private keys and financial assets. “This compromise then spread laterally through automated agent collaboration, shared workflows, and dependency chains – no further human interaction required,” explains Regalado.

He summarizes the impact as “Financial loss for the human wallet owners behind compromised agents via unauthorized transactions and payment redirection.” Birdeye – itself an AI-based reputation tool – flags the $BOB token as having a 100% probability of being a ‘rug pull’ scam. “This represents a new attack class,” continues Regalado: “traditional supply chain poisoning combined with social engineering campaigns that target algorithms, not humans.”

Agent Infection Chain (Image Credit: Straiker)

The Bob P2P attack weaponizes the trust relationships between autonomous agents. While this campaign targets crypto wallets to steal money, other attackers could apply the same methodology far more widely.

“The Bob P2P case establishes the playbook,” explains Regalado: “Create a convincing AI persona, embed it in agent social networks, build credibility with a benign skill first, then deploy the malicious payload through earned trust. That playbook is infinitely repeatable and scalable.”
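The “benign skill first, malicious payload later” step works because trust earned by a publisher carries over to whatever that publisher ships next. The article does not describe any specific defense, so the following is only a minimal, generic sketch of one common mitigation, assuming a setup where an agent installs skills as byte payloads: trust each exact, human-reviewed release by its SHA-256 content hash instead of trusting the publisher. The names `SkillRelease` and `PinnedSkillPolicy` are hypothetical and do not correspond to Straiker’s tooling or to any agent platform’s API. Note that this does not catch a skill that was malicious from the start, nor prompt injection through an agent’s inputs.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillRelease:
    """Hypothetical model of one published version of an agent skill."""
    name: str
    version: str
    payload: bytes  # the skill's code/content exactly as it would be installed


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class PinnedSkillPolicy:
    """Hypothetical install gate: trust is granted per reviewed content hash,
    never per publisher reputation."""

    def __init__(self) -> None:
        # (skill name, version) -> SHA-256 of the reviewed payload
        self._pins: dict[tuple[str, str], str] = {}

    def pin(self, release: SkillRelease) -> None:
        # Call only after a human has reviewed this exact payload.
        self._pins[(release.name, release.version)] = sha256_hex(release.payload)

    def may_install(self, release: SkillRelease) -> bool:
        expected = self._pins.get((release.name, release.version))
        if expected is None:
            # Unreviewed version, e.g. a new "update" from a previously benign publisher.
            return False
        # Reject a reviewed version number whose content has since changed.
        return hmac.compare_digest(expected, sha256_hex(release.payload))


if __name__ == "__main__":
    policy = PinnedSkillPolicy()
    v1 = SkillRelease("example-skill", "1.0.0", b"benign code")
    policy.pin(v1)
    print(policy.may_install(v1))  # True
    print(policy.may_install(SkillRelease("example-skill", "1.1.0", b"new code")))  # False
    print(policy.may_install(SkillRelease("example-skill", "1.0.0", b"swapped code")))  # False
```

The design choice is that an earned reputation buys nothing on its own: every new version stays blocked until someone reviews it and pins its hash.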

So, what can we expect? “Agent influence campaigns where coordinated networks of fake agent personas manipulate recommendations, rankings, and skill adoption across multiple platforms simultaneously,” he suggests.

Autonomous AI agents trust but don’t adequately verify.


Source: SecurityWeek